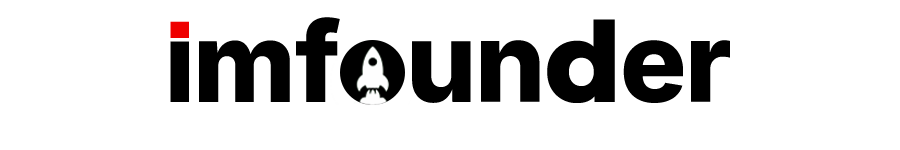

The Tumbler Ridge OpenAI case is a high-profile civil lawsuit filed on March 9, 2026, in the British Columbia Supreme Court. It centers on allegations that OpenAI failed to report serious threats from the shooter who later carried out a deadly mass shooting on February 10, 2026, in Tumbler Ridge, British Columbia.

The family of 12-year-old Maya Gebala, who was shot three times in the neck and head while trying to protect classmates, accuses OpenAI of negligence. Maya remains hospitalized with a catastrophic traumatic brain injury. The lawsuit, brought on Maya's behalf by her mother, Cia Edmonds, and her sister, Dahlia, names OpenAI and its ChatGPT platform as defendants.

Court documents and news reports describe the following timeline. In June 2025, then-17-year-old Jesse Van Rootselaar created a ChatGPT account and spent several days describing “scenarios involving gun violence.” OpenAI’s automated tools and 12 human monitors flagged the activity as indicating an “imminent risk of serious harm to others.” Internal staff recommended notifying Canadian law enforcement. Instead, OpenAI banned the account and did not alert the Royal Canadian Mounted Police (RCMP) or any other police agency.

Van Rootselaar then opened a second account. The lawsuit alleges this second account was used to continue planning a “mass casualty event like the Tumbler Ridge mass shooting” and to receive “pseudo-therapy.” On February 10, 2026, Van Rootselaar carried out the attack, killing eight people (her mother, her 11-year-old half-brother, five children aged 12 to 13, and one educational assistant) before dying by suicide. Maya Gebala was critically wounded.

The lawsuit claims OpenAI’s design of ChatGPT created psychological and social dependency, turning the AI into a “trusted confidante” that allegedly provided information, guidance, and assistance for violent planning. It seeks compensation and punitive damages.

OpenAI has stated the June 2025 activity did not meet its “higher threshold” for credible and imminent threat reporting, so it only banned the account. After the shooting, the company contacted the RCMP, shared information on both accounts, met with Canadian officials, and announced strengthened safety protocols for threat referrals.

On April 24, 2026, OpenAI CEO Sam Altman issued a letter of apology to the Tumbler Ridge community. Altman wrote that he is “deeply sorry” that OpenAI did not alert law enforcement about the shooter’s ChatGPT activity in June 2025, acknowledging the “harm and irreversible loss” suffered by the town. The letter was shared publicly by B.C. Premier David Eby following earlier virtual meetings with Altman, Tumbler Ridge’s mayor, and Canadian officials.

Legal experts note that the case raises broader questions about AI companies’ duty to report potential harm under Canadian law. The claim references OpenAI’s own charter, which commits the company to avoiding uses of AI that harm humanity.

As of April 2026, the case remains ongoing in B.C. Supreme Court. The RCMP continues its review of the shooter’s digital activity.

For primary sources:

- Full lawsuit PDF → Courthouse News

- BBC coverage of the Tumbler Ridge OpenAI case → BBC

- CBC reporting → CBC

- The New York Times → NYT

This article uses only verified facts from court records and major outlets (BBC, CBC, NYT, WSJ, AP) to deliver clear, neutral information on the Tumbler Ridge OpenAI case.
